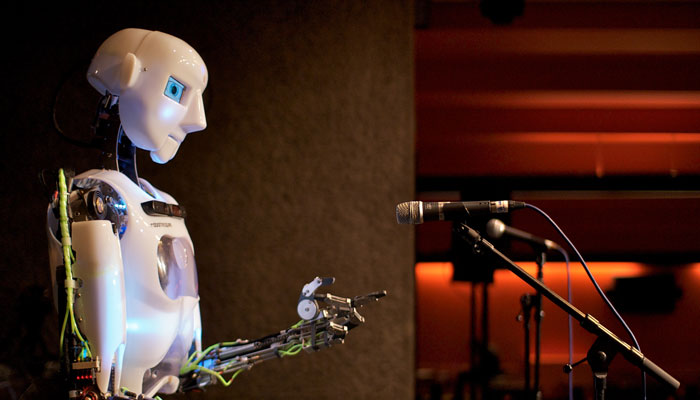

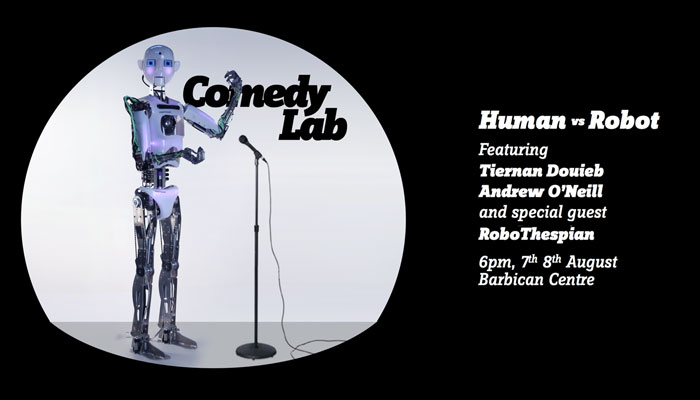

an email comes in from a performance studies phd candidate asking if they could watch the whole robot routine from comedy lab: human vs. robot. damn right. i’d love to see someone write about that performance as a performance.

but better than that staging and its weird audiences (given the advertised title, robo-fetishists and journalists?), there is comedy lab #4: on tour, unannounced. the premise: robot stand-up, performed to unsuspecting audiences at established comedy nights. that came a year later, with the opportunity to use another robothespian (thanks oxford brookes!). it addressed the ecological validity issues of the lab staging, and it should simply be more fun to watch.

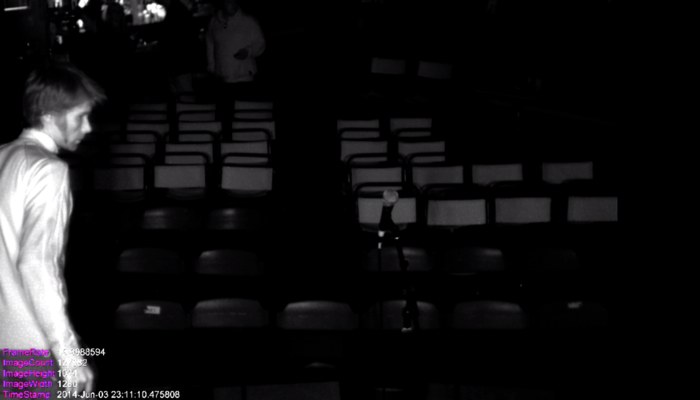

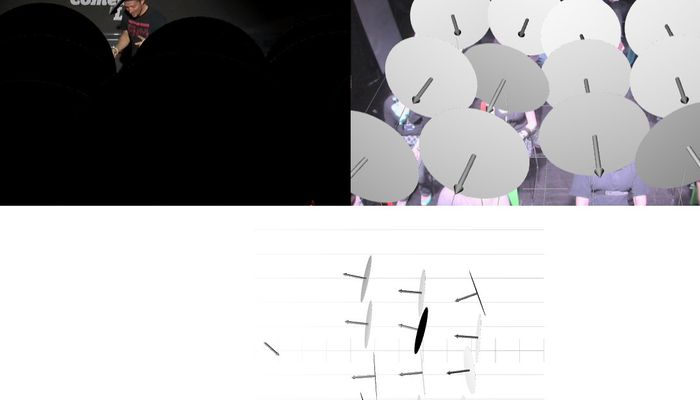

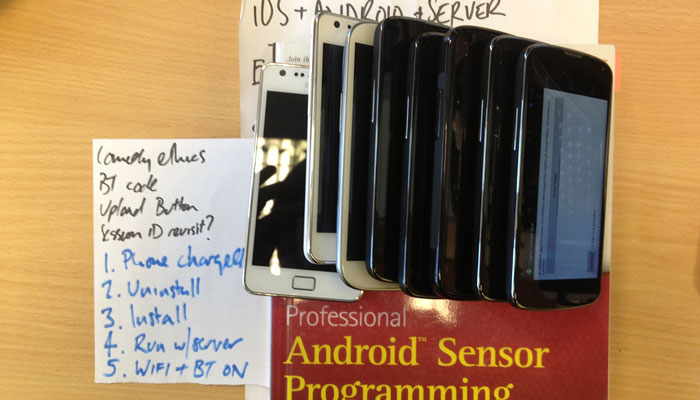

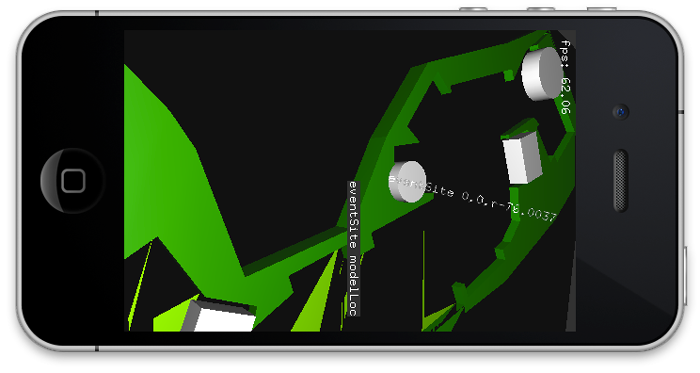

for on tour, unannounced we kept the performance the same. or rather, each performance used the same audience-responsive system to tailor the delivery in real time. there's a surprising paucity in the literature about how audiences respond differently to the same production; the idea was that this would be interesting data. so i've taken the opportunity to extract the camera footage of the stage from each night of the tour from the data set, and that footage is now public, at the links below.

- the alternative comedy memorial society
- gits and shiggles
- angel comedy

the robot comedy lab experiments form chapter 4 of my phd thesis, 'liveness: an interactional account':

Four: Experimenting with performance

The literature reviewed in chapter three also motivates an experimental programme. Chapter four presents the first, establishing Comedy Lab. A live performance experiment is staged that tests audience responses to a robot performer’s gaze and gesture. This chapter provides the first direct evidence of individual performer–audience dynamics within an audience, and establishes the viability of live performance experiments.

there are currently two published papers:

- robot stand-up: engineering a comic performance
  http://tobyz.net/diary/2014/11/robot-standup-workshop-paper
- robot comedy lab: experimenting with the dynamics of live performance
  http://tobyz.net/diary/2015/08/robot-standup-journal-paper

and finally, on ‘there is a surprising paucity…’, i’d recommend starting with gardair’s mention of mervant-roux.

- c. gardair, assembling audiences. queen mary university of london, 2013.
  http://qmro.qmul.ac.uk/jspui/handle/123456789/8486