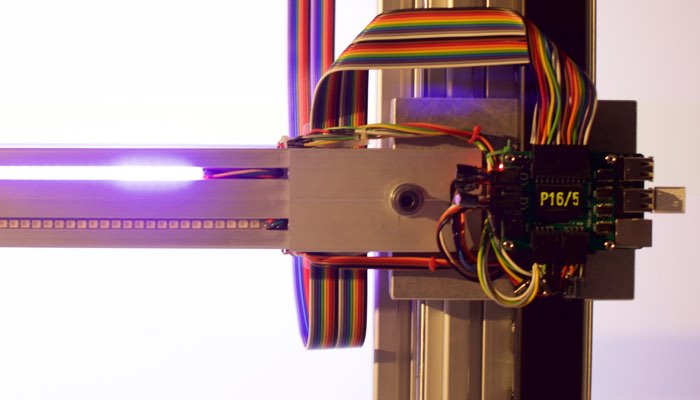

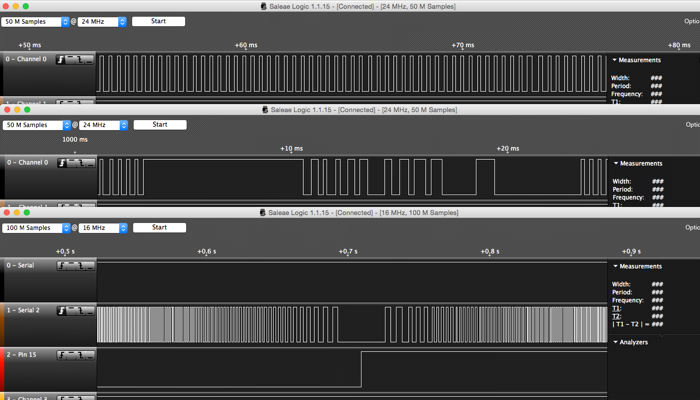

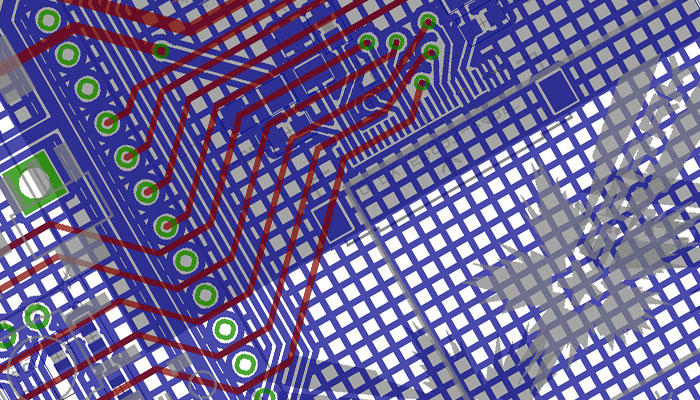

in the midst of those debug wires was a logic analyser that hooks up to a desktop app. best thing ever – thanks again to ninja friend Arron Smith. using the logic probe to examine the control signals, i stripped down the control script to create a constant turn and the result is not good. stepper pulses, top: looks even. stepper pulses, middle: hell no. that’s just a little later in the same sample.

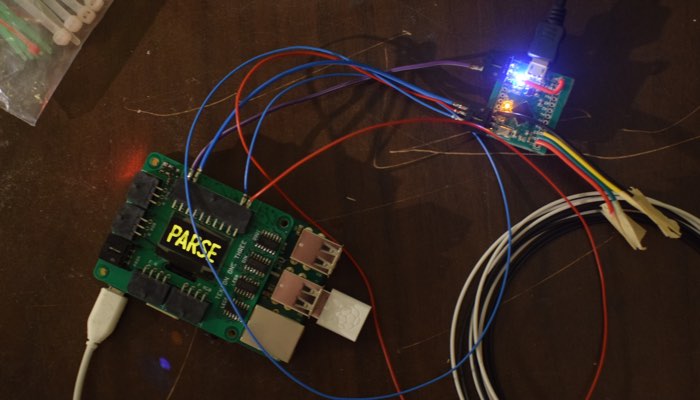

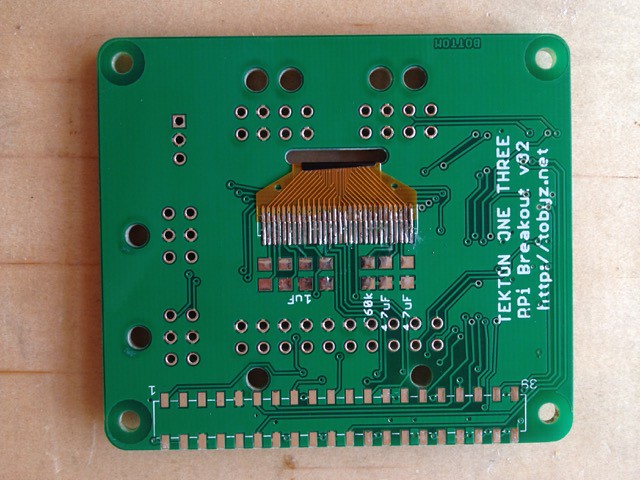

no wonder the motor doesn’t have smooth motion, with the shuddering action making horrible noises and vibrations through the structure. realisation one is that the script can’t time pulses finely enough to get anywhere near the pulse frequency needed to drive the motor at a decent speed, and realisation two is that the timing accuracy is just not there. running this machine from a python script on a raspberry pi with its stock linux installation was an experiment, and this is where “let’s try the future” fell down. simply, it’s not a realtime environment. however, a bare-bones sketch on an arduino, flipping a digital out between delayMicroseconds() calls of 5µs and up, did work beautifully. somehow a middle ground had to be reached.
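to get a feel for the problem without a logic analyser, here is a minimal sketch that asks python for a fixed pulse period and records what it actually gets. it is an illustration, not the control script from the machine: pacing the pulses with `time.sleep` is an assumption, and `measure_intervals` and `summarise` are made-up names.

```python
import time


def measure_intervals(period_s, count):
    """ask for a fixed gap between pulses and record the gaps we really get."""
    gaps = []
    last = time.perf_counter()
    for _ in range(count):
        time.sleep(period_s)  # stand-in for "wait, then flip the step pin"
        now = time.perf_counter()
        gaps.append(now - last)
        last = now
    return gaps


def summarise(gaps, period_s):
    """return (mean, worst) error in microseconds against the requested period."""
    errors_us = [(gap - period_s) * 1e6 for gap in gaps]
    return sum(errors_us) / len(errors_us), max(errors_us)


if __name__ == "__main__":
    period = 100e-6  # assumed: a 100µs pulse period, purely for illustration
    mean_us, worst_us = summarise(measure_intervals(period, 500), period)
    print(f"asked for {period * 1e6:.0f}µs: mean error {mean_us:.1f}µs, worst {worst_us:.1f}µs")
```

on a stock, non-realtime os the worst-case figure is the one to watch: the scheduler can take the processor away at any moment, and a single late pulse is enough to be felt mechanically.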

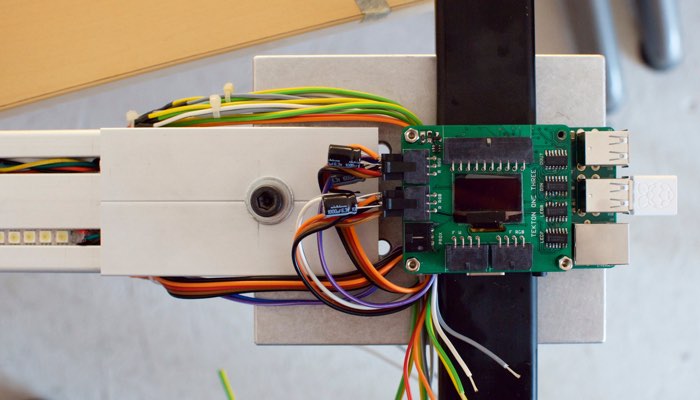

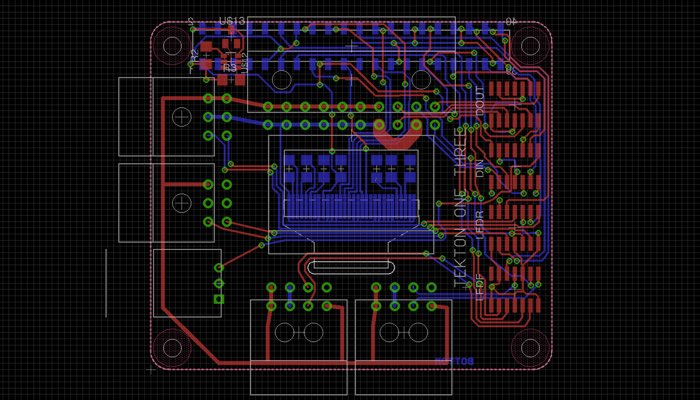

the first stage of that was to simulate the 60fps commands of the driving animation while being a stand-alone program. the catch is that a sin calculation takes ~120µs on an arduino, considerably longer than the minimum pulse time of around 5-10µs, so it can’t be done on every pass of the loop. the beautiful step signal shown bottom is the output of calculating sin(time) every 1/60s, and for every loop in-between, calculating a linear interpolation between the last two sin points, comparing that with the current position, and issuing a step and/or direction change if needed.
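the loop described above can be sketched as a desktop simulation. this is python rather than the arduino sketch itself, and the loop count, sweep size and sweep rate are assumptions picked only to make it run; `target_at` and `simulate` are made-up names.

```python
import math

FRAME_S = 1 / 60          # a fresh sine point every frame, as in the post
LOOPS_PER_FRAME = 800     # assumed: fast-loop passes that fit in one frame
AMPLITUDE_STEPS = 2000    # assumed: half-width of the sweep, in motor steps
SWEEP_HZ = 0.25           # assumed: sweeps per second


def target_at(t):
    """the expensive call: where the sine wave says the motor should be."""
    return AMPLITUDE_STEPS * math.sin(2 * math.pi * SWEEP_HZ * t)


def simulate(frames):
    """run the loop for a number of frames.

    returns (final position, list of +1/-1 steps issued, worst lag in steps).
    """
    position = 0
    steps = []
    worst_lag = 0.0
    older = newer = target_at(0.0)
    for frame in range(1, frames + 1):
        # once per frame: shuffle the two sine points along
        older, newer = newer, target_at(frame * FRAME_S)
        for loop in range(LOOPS_PER_FRAME):
            # every pass: a cheap straight-line blend between the two points
            fraction = (loop + 1) / LOOPS_PER_FRAME
            wanted = older + (newer - older) * fraction
            error = wanted - position
            if error >= 1:        # a whole step behind: step forwards
                position += 1
                steps.append(+1)
            elif error <= -1:     # a whole step ahead: flip direction and step back
                position -= 1
                steps.append(-1)
            worst_lag = max(worst_lag, abs(wanted - position))
    return position, steps, worst_lag


if __name__ == "__main__":
    position, steps, worst_lag = simulate(240)  # four seconds, one full sweep
    print(f"{len(steps)} steps issued, worst lag {worst_lag:.2f} steps")
```

the point of the design is that the slow sin call happens once per frame, while the per-pass work is a multiply, an add and a compare, which is what leaves room for evenly spaced pulses.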

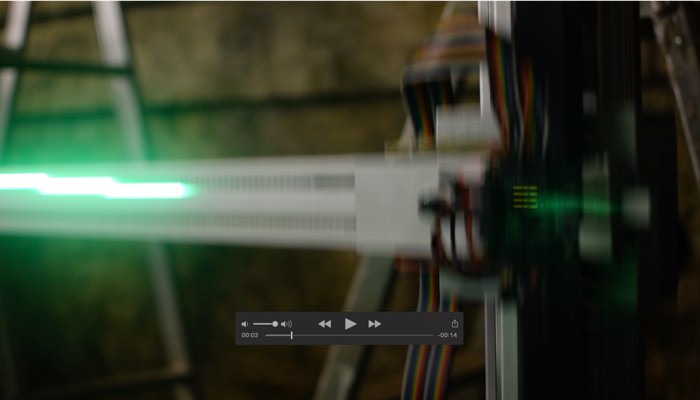

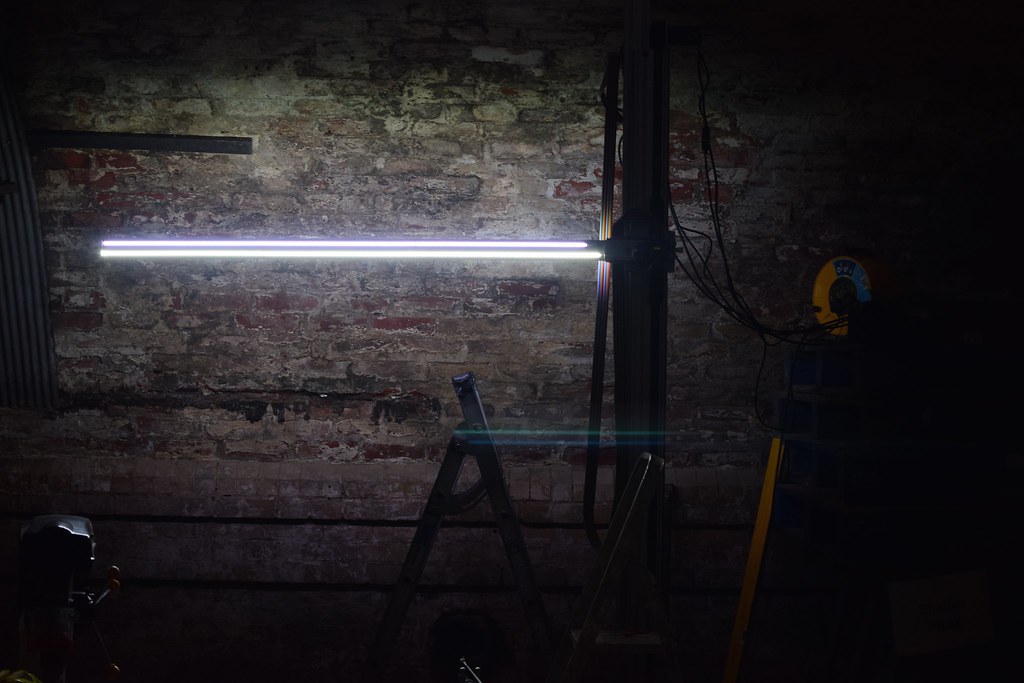

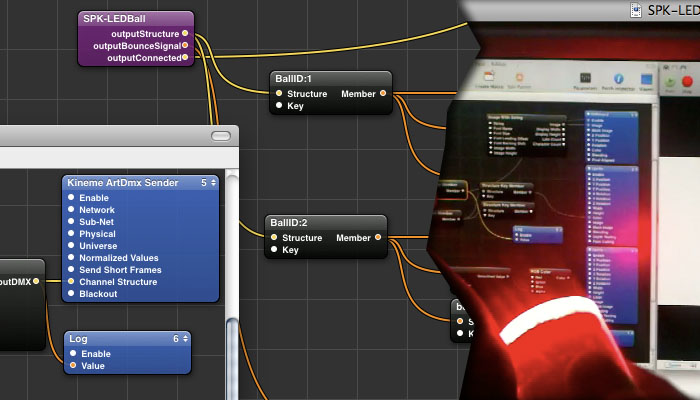

later, in the down-time during shipping and the gallery install, i’d spent time working through options to drive the motor better. the machine would run smoothly – purr, even – if i drove it with something known-smooth like a sine wave, but whenever i switched from this a problem appeared. the intention had always been to add some kind of inertial smoothing to the control, so that whatever input the controller took would translate into something that was mechanically viable for the machine. accelerating the led bar puts torque on the linear bearing, and the weight of the whole assembly will simply stall a stepper motor pulsed from still to a constant turn. the answer should be to employ a physical model in the code, which, a little research showed, the AccelStepper library effectively does. you can command a step position, and it will take the motor there within a defined envelope of speed and acceleration. every stepper project should use this… except it turns out the maths is too computationally expensive to achieve anything close to stepping fast enough for this project on an 8MHz arduino.
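to show what “within a defined envelope of speed and acceleration” means, here is a minimal sketch of an envelope-limited move. it is not AccelStepper’s code or its algorithm, just a simple physical model in python; the speed and acceleration limits are assumed numbers, and `advance` and `run` are made-up names.

```python
import math


def advance(position, velocity, target, max_speed, max_accel, dt):
    """move one time slice towards target without leaving the envelope."""
    distance = target - position
    # fastest speed from which the motor could still brake to a stop in time
    brake_speed = math.sqrt(2 * max_accel * abs(distance))
    wanted = math.copysign(min(max_speed, brake_speed), distance)
    # only let the velocity change by what the acceleration limit allows
    max_change = max_accel * dt
    velocity += max(-max_change, min(max_change, wanted - velocity))
    return position + velocity * dt, velocity


def run(target, max_speed=500.0, max_accel=1000.0, dt=0.001, seconds=4.0):
    """return (position, velocity) samples for one move starting from rest.

    assumed units: steps, steps/s and steps/s², chosen only for illustration.
    """
    position, velocity = 0.0, 0.0
    samples = []
    for _ in range(int(round(seconds / dt))):
        position, velocity = advance(position, velocity, target, max_speed, max_accel, dt)
        samples.append((position, velocity))
    return samples


if __name__ == "__main__":
    samples = run(1000.0)
    peak = max(abs(v) for _, v in samples)
    print(f"peak speed {peak:.0f} steps/s, final position {samples[-1][0]:.2f}")
```

the velocity ramps up, cruises and ramps down instead of jumping from still to a constant turn. the square root on every slice also hints at why this kind of maths gets expensive on a small microcontroller.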

problem was, any other strategy i tried in my own stepper driving code proved insufficient – i had high hopes for a simple median filter. this may have been partly due to the other side of driving the stepper smoothly: the microsecond consistency of pulse timings (as discovered originally, trying to drive direct from the pi). i suspect the serial read of commanded step position from the pi was interfering with this and/or disrupting the exact regularity between received commands that the linear interpolation relied upon.
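for reference, a sliding-window median filter over the commanded positions looks something like this. it is a generic sketch rather than the code that was tried on the machine; the window size is an assumption and `MedianFilter` is a made-up name.

```python
from collections import deque
from statistics import median


class MedianFilter:
    """report the median of the last few commanded positions."""

    def __init__(self, window=5):
        # assumed window size; a deque with maxlen drops the oldest sample itself
        self.samples = deque(maxlen=window)

    def update(self, value):
        self.samples.append(value)
        return median(self.samples)


if __name__ == "__main__":
    smooth = MedianFilter(window=3)
    for commanded in [10, 11, 500, 12, 13]:  # 500 is a single glitched command
        print(commanded, "->", smooth.update(commanded))
```

a median filter throws away one-off glitches like the 500 above, but a genuine jump in the input still comes out as a jump, only a few samples late. it puts no bound on acceleration, so on its own it can’t stand in for a speed and acceleration envelope.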

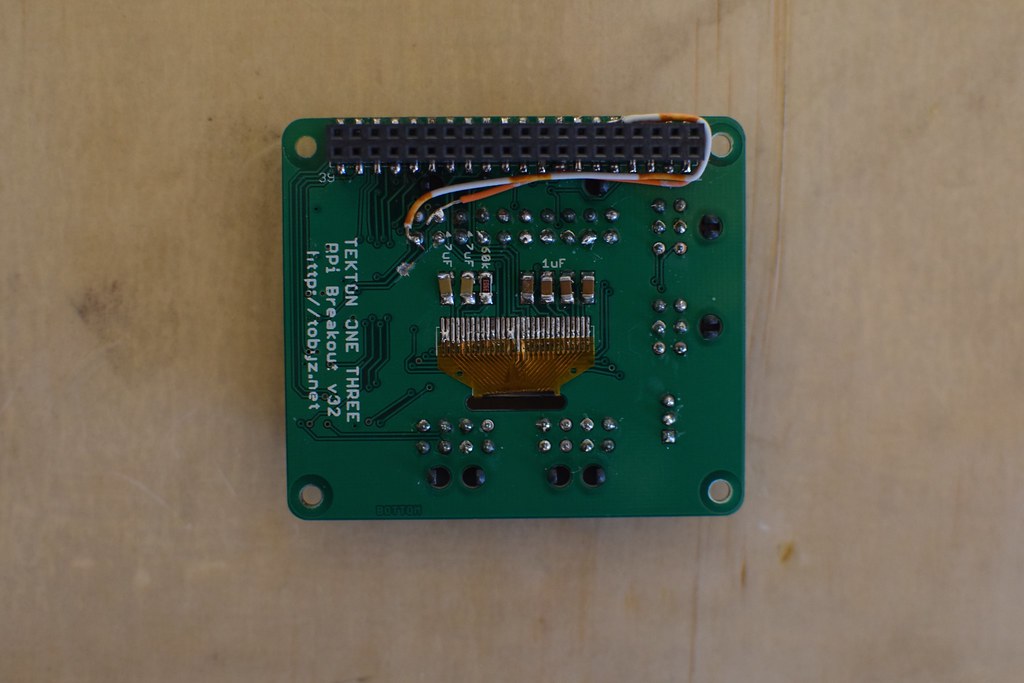

in hindsight, two things stand out. one is that the raspberry pi is incredibly fast and powerful compared to the arduino, so running such a library fast enough would present no issue, if it ran a realtime operating system giving timing guarantees akin to the arduino. that would have required more linux-fu than i had headspace for at the time, but is surely the correct way to go. the other is a little more embarrassing, in that research since has shown the stepper driver – the hardware box that takes the logic level step pulses and makes it so – has an optional smoothing filter that is configurable by software. i never got to this, as mentally the driver was sold as not needing software configuration, the very point being it was pre-tuned for this series of motors, and physically, the interface cable was bespoke and i didn’t buy it. just perhaps breaking that mental mould to make up that cable could have saved me nights’ worth of grief.

to cut back to sitting in front of the machine with the opening in hours not days, anything that wasn’t that sinusoidal self-driving arduino sketch was causing horrible, horrible sounds (something like a resonant frequency in the half-coupling and splined shaft that slotted into the pulley), and so running sinusoidal was what it was going to be.

and to underline just how crazy the whole motor episode was, simply adding a debug line to send the current position of the motor to the serial console when the belt mid-point marker passes the sensor caused mechanical vibrations that made you want to turn the whole thing off. that’s how sensitive the pulse timings are. throughout, it has been the worst case of observing changing the observed.